AWS CodePipeline: How to use CloudFormation nested stacks

A walkthrough for a .NET Core serverless project

I am an AWS Certified Solutions Architect with over 12 years of experience and a primary focus on AWS serverless solutions, AWS CDK, event-driven architecture and Python.

Intro

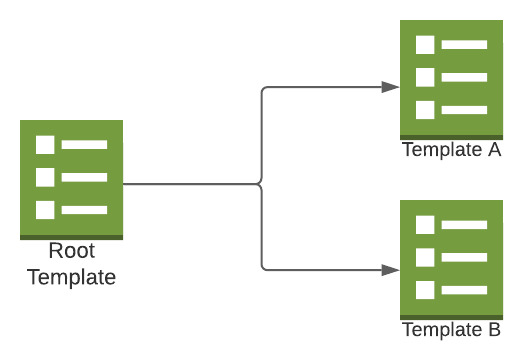

Nested stacks are stacks created as part of other stacks. The usual use cases are splitting a project into modules, reusing common patterns, or dividing resources to stay within the CloudFormation service quotas.

We will refer to a simple serverless app that deploys API Gateways & Lambda functions. The example project has two modules linked with one root template.

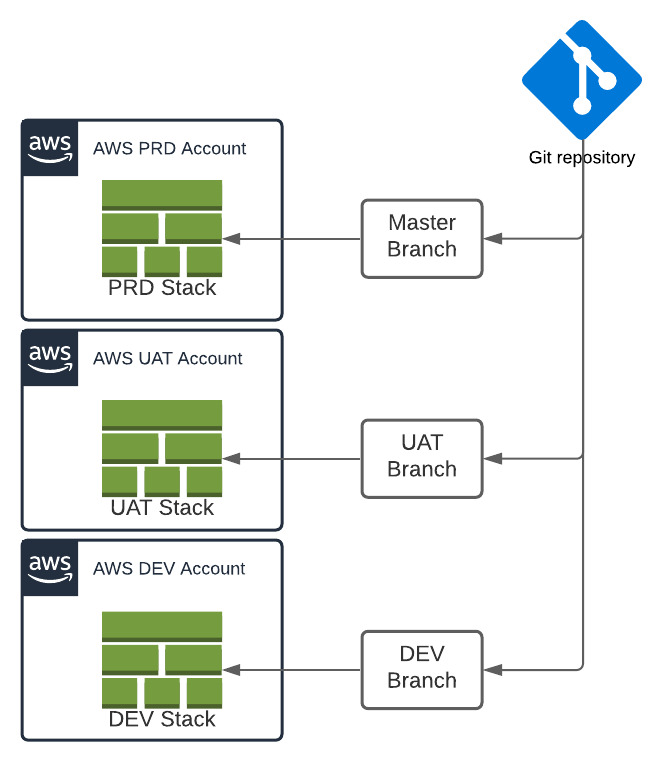

The second aspect we need to consider is that this template should work across development, UAT and production environments without any manual changes. As the code is pushed to the respective branches, the CI/CD should kick in and update the stack in different AWS environments.

The key points to consider in this type of setup are:

Correctly map the TemplateURL: This is the S3 URL for the Child template.

Correctly map the CodeURI: This is the link to the Lambda source code.

Handle custom resource mapping across multiple environments.

Root Template

This is the template responsible for successfully calling other Child templates and passing any required parameters.

    AWSTemplateFormatVersion: 2010-09-09
    Transform: 'AWS::Serverless-2016-10-31'
    Description: Starting template for an AWS Serverless Application.
    Parameters:
      ObjectKey:
        Type: String
      EnvironmentType:
        Description: The environment type
        Type: String
        Default: dev
        AllowedValues:
          - dev
          - uat
          - prd
    Mappings:
      EnvironmentDetails:
        dev:
          AppBucket: dev-app-deploy
          AppLambdaRole: arn:aws:iam::11111111:role/App-Lambda-Role
          AppSecret: arn:aws:secretsmanager:eu-west-1:11111111:secret:dev-db-secret-v6y5YA
          AppDB: arn:aws:rds:eu-west-1:11111111:cluster:app-dev-db
        uat:
          AppBucket: uat-app-deploy
          AppLambdaRole: arn:aws:iam::22222222:role/App-Lambda-Role
          AppSecret: arn:aws:secretsmanager:eu-west-1:22222222:secret:uat-db-secret-n5PUnA
          AppDB: arn:aws:rds:eu-west-1:22222222:cluster:app-uat-db
        prd:
          AppBucket: prd-app-deploy
          AppLambdaRole: arn:aws:iam::33333333:role/App-Lambda-Role
          AppSecret: arn:aws:secretsmanager:eu-west-1:33333333:secret:prd-db-secret-j1kBvA
          AppDB: arn:aws:rds:eu-west-1:33333333:cluster:app-prd-db
    Resources:
      AppModuleA:
        Type: AWS::CloudFormation::Stack
        Properties:
          TemplateURL: ./Templates/ModuleA.yaml
          Parameters:
            ObjectPath: !Ref ObjectKey
            # DeployBucket has no default in the child template, so it must be passed here
            DeployBucket: !FindInMap [EnvironmentDetails, !Ref EnvironmentType, AppBucket]
            LambdaRole: !FindInMap [EnvironmentDetails, !Ref EnvironmentType, AppLambdaRole]
            DBSecret: !FindInMap [EnvironmentDetails, !Ref EnvironmentType, AppSecret]
            DeployDB: !FindInMap [EnvironmentDetails, !Ref EnvironmentType, AppDB]
            Stage: !Ref EnvironmentType
      AppModuleB:
        Type: AWS::CloudFormation::Stack
        Properties:
          TemplateURL: ./Templates/ModuleB.yaml
          Parameters:
            ObjectPath: !Ref ObjectKey
            DeployBucket: !FindInMap [EnvironmentDetails, !Ref EnvironmentType, AppBucket]
            LambdaRole: !FindInMap [EnvironmentDetails, !Ref EnvironmentType, AppLambdaRole]
            DBSecret: !FindInMap [EnvironmentDetails, !Ref EnvironmentType, AppSecret]
            DeployDB: !FindInMap [EnvironmentDetails, !Ref EnvironmentType, AppDB]
            Stage: !Ref EnvironmentType
    Outputs:
      BuildObject:
        Value: !Ref ObjectKey

Let's cover each section starting with "Parameters":

1) **ObjectKey**: No value is assigned to this parameter in the template. The AWS CodePipeline "deploy" stage sets it to the object name of the BuildArtifact.

2) **EnvironmentType**: This defines the deployment type (dev/uat/prd). I used the default value of "dev", but a default is not required. We override the value during the AWS CodePipeline "deploy" stage for the UAT & PRD environments.

The next section of "Mappings" contains three keys: dev/uat/prd. These keys must be the same as the allowed values in the "EnvironmentType" variable.

The mappings section holds the values that the child templates need and that change with the deployment environment. In this example, the S3 bucket, Lambda role and Aurora DB & secret are listed, as they differ for each deployment type. For complete automation, we must include every entity that changes with the environment.
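The lookup that "!FindInMap" performs, and the rule that the mapping keys must match the allowed values, can be pictured in a few lines of Python. This is only a sketch: `ENVIRONMENT_DETAILS`, `ALLOWED_ENVIRONMENTS`, `find_in_map` and `check_mapping_keys` are illustrative names of my own, not part of CloudFormation, and the data is trimmed from the root template.

```python
# Trimmed copy of the "Mappings" section from the root template.
ENVIRONMENT_DETAILS = {
    "dev": {"AppBucket": "dev-app-deploy"},
    "uat": {"AppBucket": "uat-app-deploy"},
    "prd": {"AppBucket": "prd-app-deploy"},
}

# AllowedValues of the "EnvironmentType" parameter.
ALLOWED_ENVIRONMENTS = ["dev", "uat", "prd"]


def find_in_map(mapping, top_level_key, second_level_key):
    """Mimic !FindInMap [Map, TopLevelKey, SecondLevelKey] on a plain dict."""
    return mapping[top_level_key][second_level_key]


def check_mapping_keys(mapping, allowed_values):
    """Return the allowed values that have no matching top-level mapping key."""
    return sorted(set(allowed_values) - set(mapping))


if __name__ == "__main__":
    print(find_in_map(ENVIRONMENT_DETAILS, "uat", "AppBucket"))
    print(check_mapping_keys(ENVIRONMENT_DETAILS, ALLOWED_ENVIRONMENTS))
```

An empty list from `check_mapping_keys` means every allowed environment has an entry in the mapping, so a lookup cannot fail because of a missing key.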

The "Resources" section contains references to the two child stacks. We can define other resources or add more child stacks; it depends on the application structure and requirements.

Two things to note here:

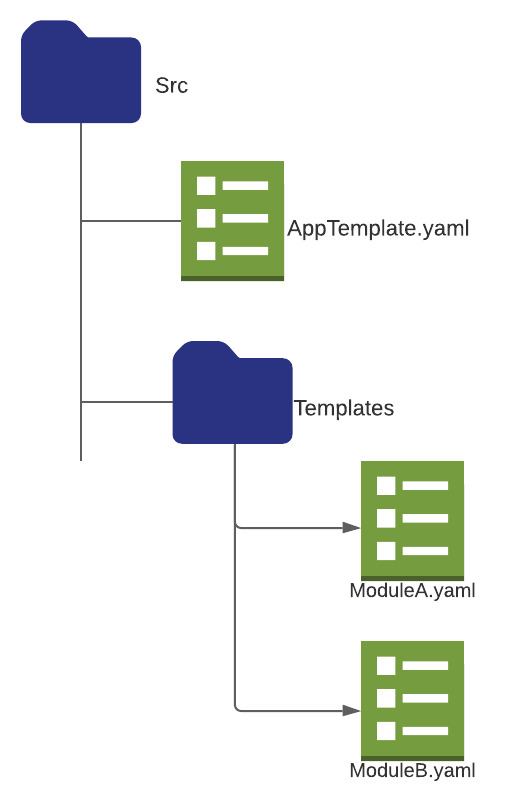

TemplateURL: This is the relative path from the root template. If all the templates are in the same folder, just mention the template name. I've put the child templates in the "Templates" folder to demo the path format.

Parameters: All the parameters listed here are defined in the child template and are used to carry over the values passed on by the root template.
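A mismatch between the parameters the root template passes and the ones the child template declares is an easy mistake to make, so a quick check before pushing can save a failed deployment. The sketch below is a hypothetical helper of my own (`compare_parameters`), working on plain dictionaries rather than real templates; the sample names in the usage part are made up for illustration.

```python
def compare_parameters(child_parameters, root_passed):
    """Compare a child template's declared parameters with what the root passes.

    child_parameters: dict of parameter name -> definition (as in the
                      "Parameters" section of the child template).
    root_passed:      iterable of parameter names listed under the nested
                      stack's "Parameters" property in the root template.
    Returns (missing, unknown): declared parameters without a Default that
    are not passed, and passed names the child does not declare.
    """
    required = {
        name for name, spec in child_parameters.items() if "Default" not in spec
    }
    passed = set(root_passed)
    missing = sorted(required - passed)
    unknown = sorted(passed - set(child_parameters))
    return missing, unknown


if __name__ == "__main__":
    # Hypothetical child template parameters and root template values.
    child = {
        "ObjectPath": {"Type": "String"},
        "DeployBucket": {"Type": "String"},
        "Stage": {"Type": "String", "Default": "dev"},
    }
    missing, unknown = compare_parameters(child, ["ObjectPath", "Region"])
    print("missing:", missing)
    print("unknown:", unknown)
```

Both lists should be empty for a nested stack to receive exactly the parameters it expects.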

The Output section at the end is optional; it outputs the path & filename of the "BuildArtifact". Output info is helpful for traceability and troubleshooting purposes.

Child Template

This template will contain the application resources and will change as per project requirements. The focus is to demo how the parameters are passed over from the root template into the child template.

    AWSTemplateFormatVersion: 2010-09-09
    Transform: "AWS::Serverless-2016-10-31"
    Description: ver - 2022010201 (Format YYYYMMDDEE) App moduleA resources.
    Parameters:
      ObjectPath:
        Type: String
      DeployBucket:
        Type: String
      LambdaRole:
        Type: String
      DBSecret:
        Type: String
      DeployDB:
        Type: String
      Stage:
        Type: String
    Globals:
      Function:
        Runtime: dotnetcore3.1
        MemorySize: 1024
        Timeout: 180
        CodeUri:
          Key: !Ref ObjectPath
          Bucket: !Ref DeployBucket
        Environment:
          Variables:
            DB_SECRET_ARN: !Ref DBSecret
            DB_RESOURCE_ARN: !Ref DeployDB
    Resources:
      moduleaapi:
        Type: "AWS::Serverless::Api"
        Properties:
          StageName: !Ref Stage
          Name: app_module_a_api
          EndpointConfiguration: Regional
          Cors:
            AllowMethods: "'POST, GET, OPTIONS, PATCH, DELETE, PUT'"
            AllowHeaders: "'Content-Type, X-Amz-Date, Authorization, X-Api-Key, x-requested-with'"
            AllowOrigin: "'*'"
            MaxAge: "'600'"
      APIMapping:
        Type: AWS::ApiGateway::BasePathMapping
        Properties:
          BasePath: modulea
          DomainName: !Join [ "-", [ !Ref Stage, api.mydomain.com ] ]
          RestApiId: !Ref moduleaapi
          Stage: !Ref Stage
      LambdaA:
        Type: "AWS::Serverless::Function"
        Properties:
          FunctionName: Tasks
          Handler: "APP::APP.TaskLambda::TasksAsync"
          Description: Get/add/update Tasks
          Role: !Ref LambdaRole
          Events:
            ProxyResource:
              Type: Api
              Properties:
                Path: /Tasks
                Method: Any
                RestApiId: !Ref moduleaapi
      LambdaB:
        Type: "AWS::Serverless::Function"
        Properties:
          FunctionName: GetUserTasks
          Handler: "APP::APP.TaskLambda::GetUserTasksAsync"
          Description: Get/add/update Tasks
          Role: !Ref LambdaRole
          Events:
            ProxyResource:
              Type: Api
              Properties:
                Path: /GetUserTasks
                Method: Any
                RestApiId: !Ref moduleaapi
      LambdaC:
        Type: "AWS::Serverless::Function"
        Properties:
          FunctionName: DBUpdate
          Handler: "APP::APP.TaskLambda::DBUpdateAsync"
          Description: insert user data
          Role: !Ref LambdaRole
    Outputs:
      CodePath:
        Value: !Ref ObjectPath

The above template uses standard CloudFormation SAM syntax, so I will not explain each section. The key takeaway is how "CodeUri" is defined under the "Globals" section: "Key" points to the "BuildArtifact" created during the Pipeline build stage, and "Bucket" refers to the S3 bucket where this BuildArtifact is stored.

The YAML templates are now ready to deploy. Next is the AWS Pipeline setup. We need to give special consideration to the below points:

Point Pipeline to use the correct S3 bucket, as per the deployment environment (dev/uat/prd).

Correctly map the "ObjectKey" parameter in the root template to the Build Artifact.

Make sure the "EnvironmentType" parameter has the correct value based on the environment.

AWS CodePipeline

Navigate to the AWS CodePipeline console and select "Create Pipeline".

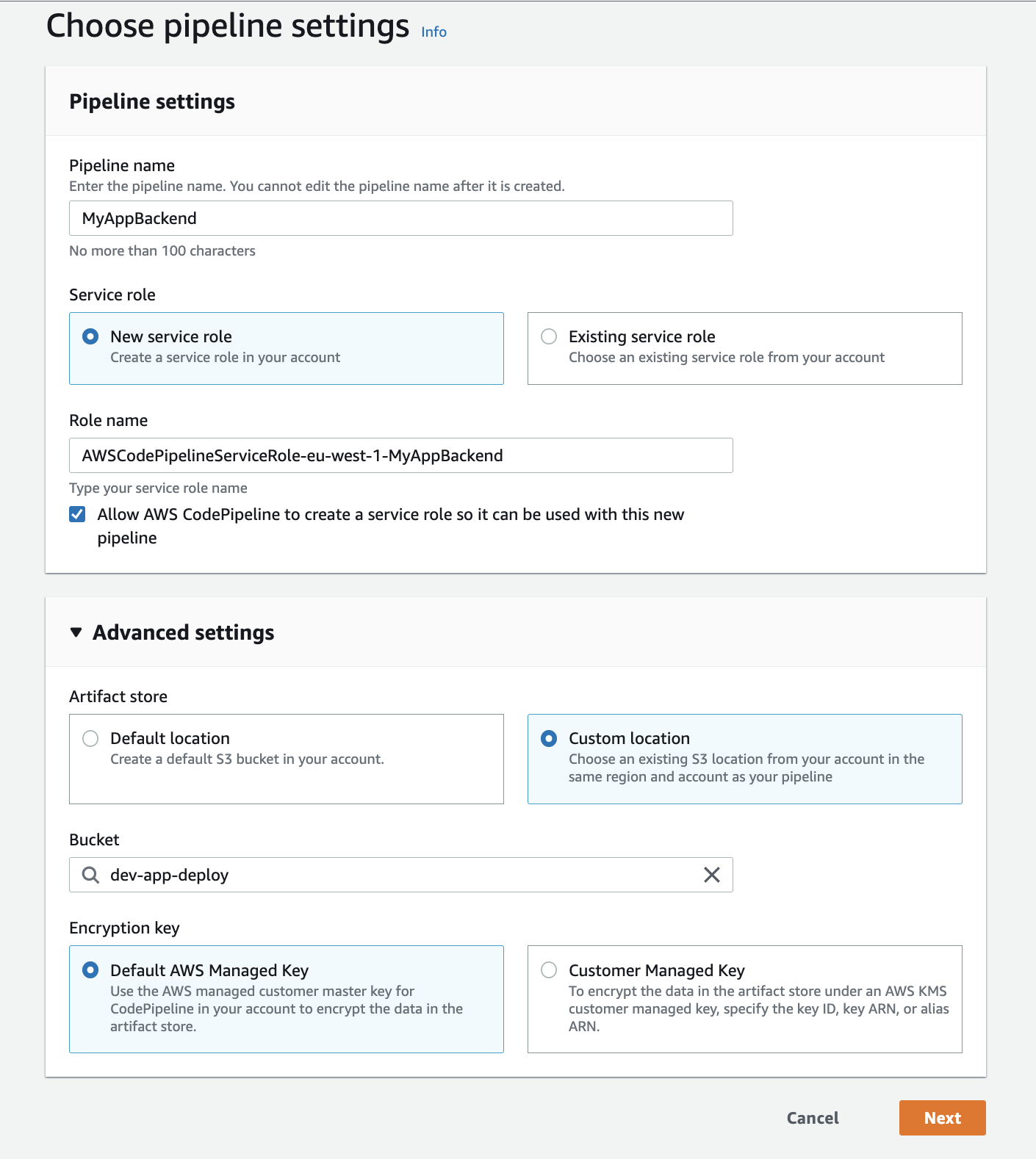

STEP 1 Settings: Provide a relevant name for the Pipeline, and under "Advanced settings" for "Artifact store", select "custom location" and select the relevant S3 bucket. In the example, I've chosen "dev-app-deploy" as I work in the development environment.

The S3 mapping is optional; if we leave the default, the Pipeline uses an S3 bucket that it creates itself. (We will cover later how to fetch this bucket name.)

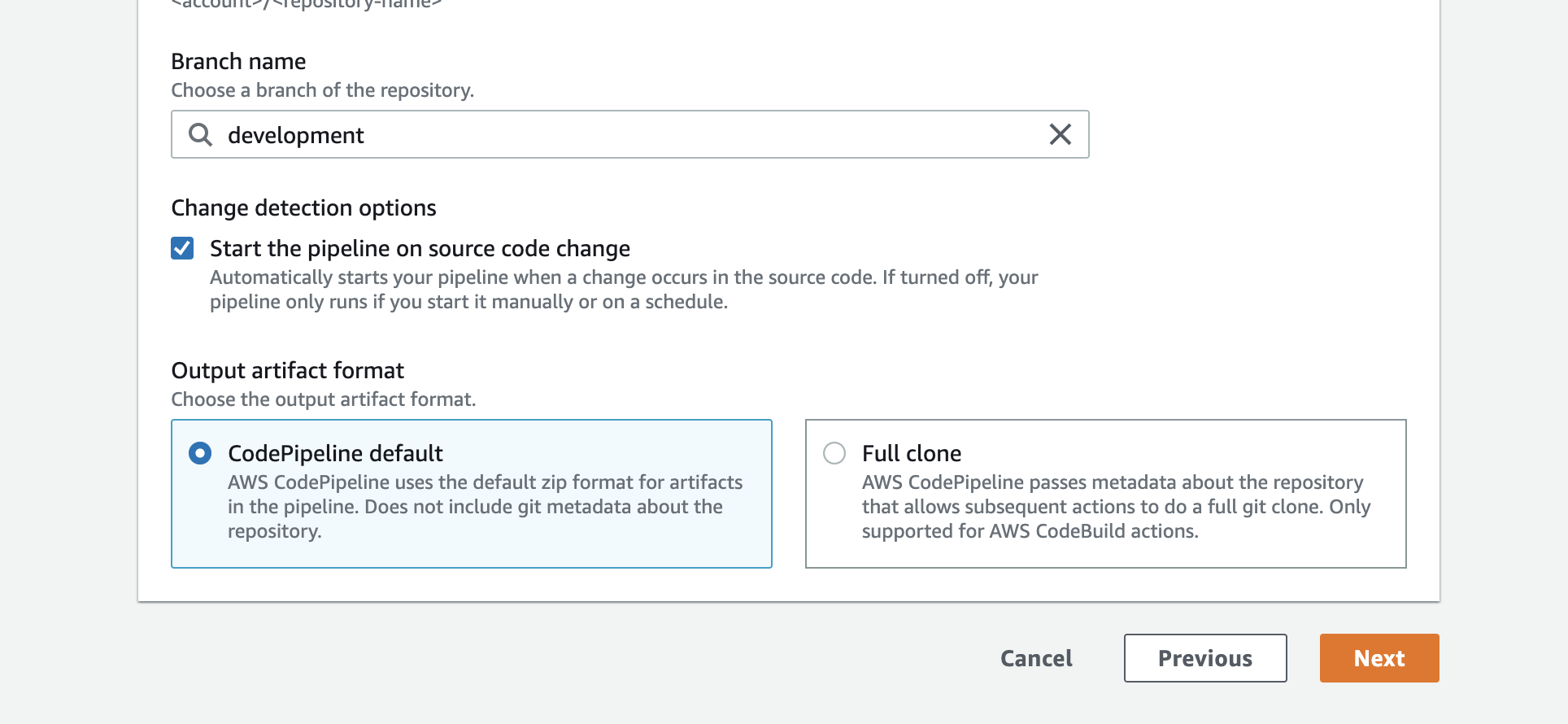

STEP 2 Source: Select your source as GitHub (version 2) or Bitbucket based on your use case. Configure the connection with your GitHub or Bitbucket credentials. Select the relevant repo and make sure you select the correct branch. For example, select the development branch if the Pipeline is for the development environment. Click "Next".

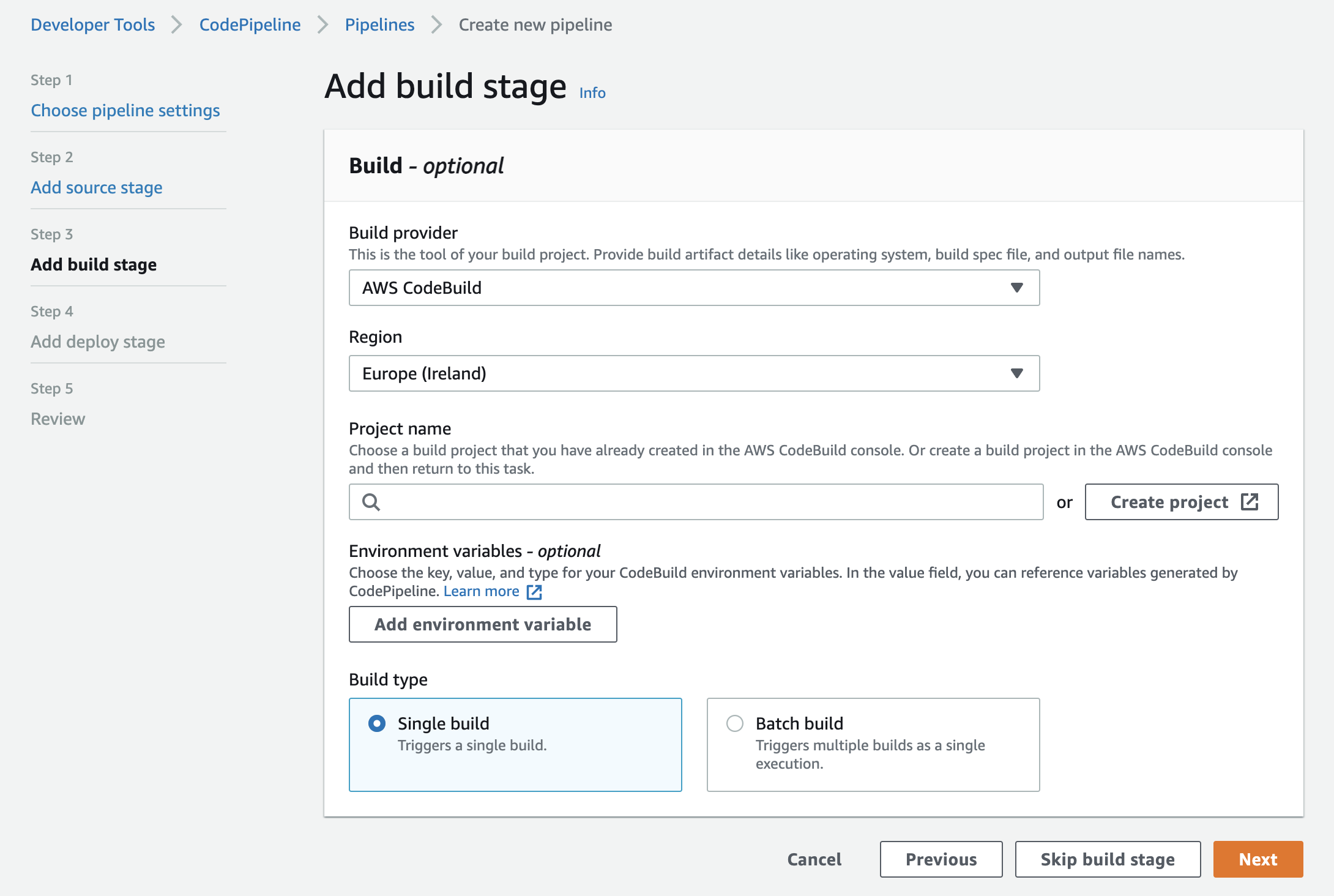

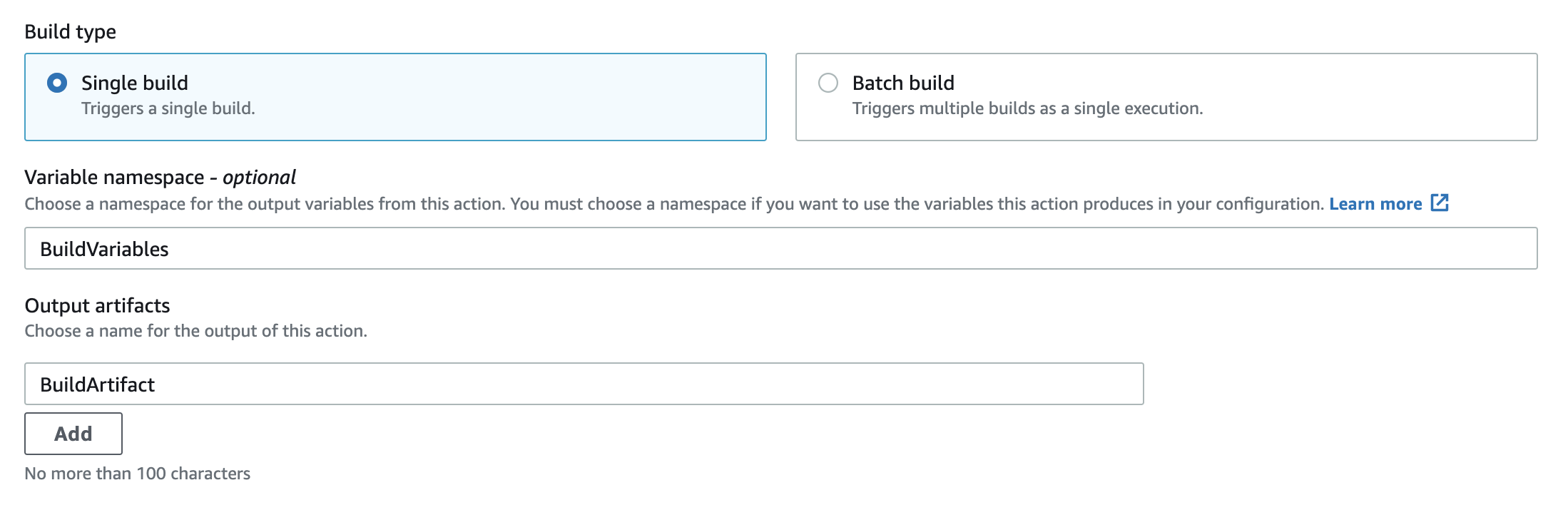

STEP 3 Build: For the "Build Provider", select "AWS CodeBuild" and click "Create project". This should open up a new pop-up window for build project creation.

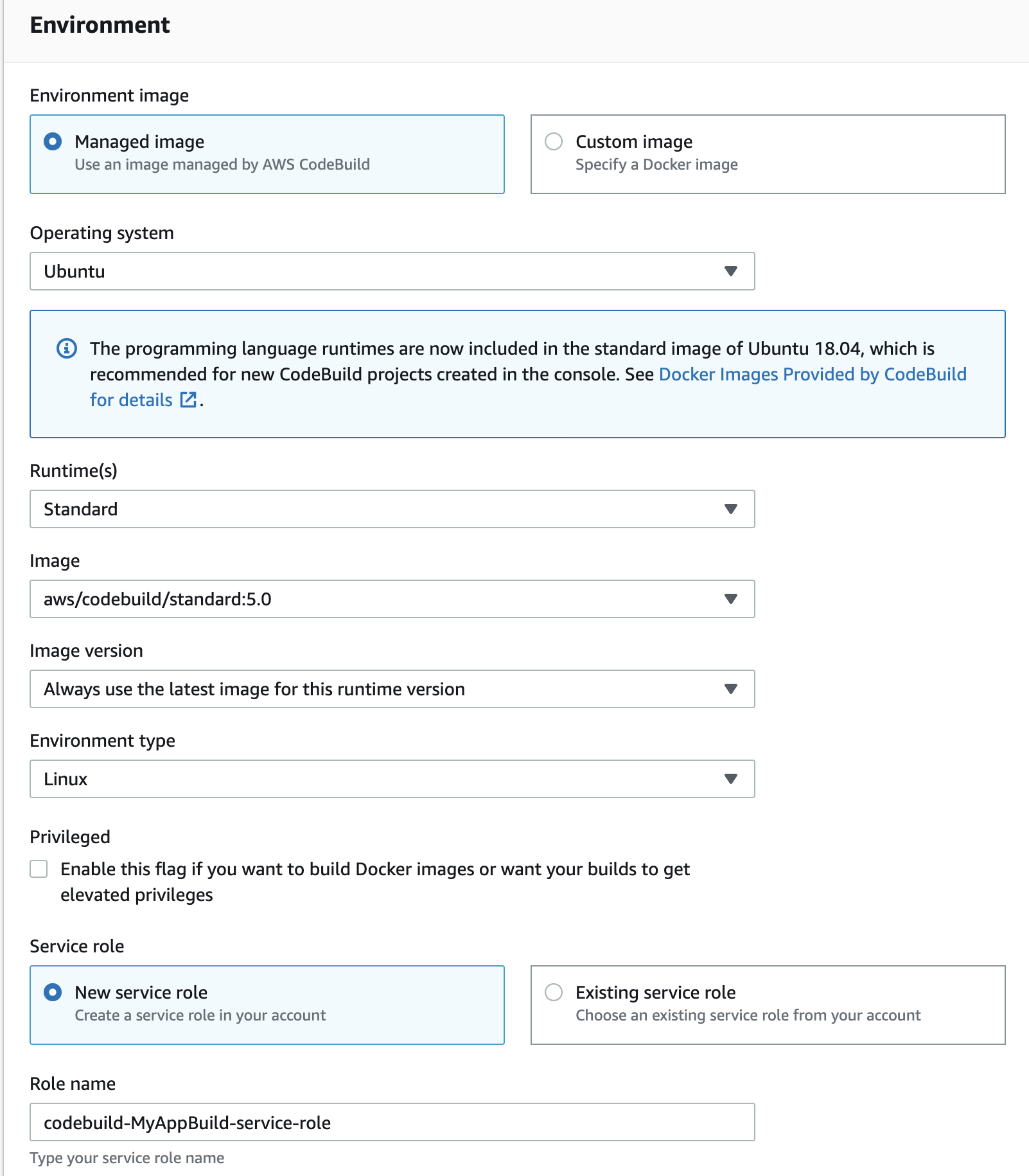

In the pop-up, provide a relevant name for the build project and under "Environment" select:

"Managed image"

"Operating system" = Ubuntu

"Runtime(s)" = Standard

"Image" = aws/codebuild/standard:5.0

I've used the above setup successfully for .NET Core 3, Node.js and Python projects.

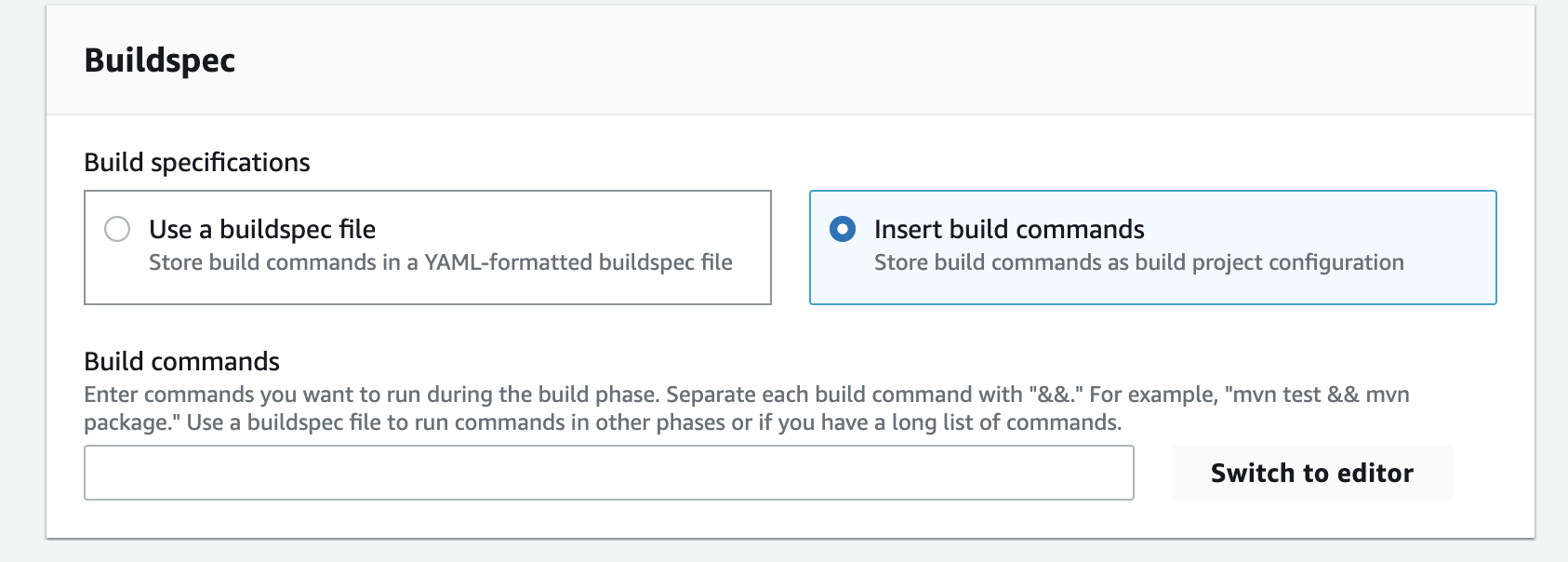

Next, under the "Buildspec" section, select "insert build commands" and click "switch to editor".

I've used the build commands below for the .NET Core project. The actual build commands will vary between languages and frameworks. In addition to a standard build, we added the "sam package" command in the "post_build" section. This command is not .NET specific and is required for other build types as well.

    version: 0.2
    # Build phases must sit under the top-level "phases" key
    phases:
      pre_build:
        commands:
          - dotnet restore AppProject/app.csproj
      build:
        commands:
          - dotnet build AppProject/app.csproj
      post_build:
        commands:
          - dotnet publish -c Release -r linux-x64 --self-contained false AppProject/app.csproj
          # Upload the child templates and write the processed root template into the publish folder
          - sam package --template-file AppProject.yaml --s3-bucket dev-app-deploy --output-template-file ./AppProject/bin/Release/netcoreapp3.1/linux-x64/publish/packaged-template.yml
    artifacts:
      files:
        - '**/*'
      base-directory: ./AppProject/bin/Release/netcoreapp3.1/linux-x64/publish/
      discard-paths: no

The "sam package" command

The command returns a copy of your AWS SAM template, replacing references to local artifacts with the Amazon S3 location as per the --s3-bucket value.

sam package --template-file AppProject.yaml --s3-bucket dev-app-deploy --output-template-file ./AppProject/bin/Release/netcoreapp3.1/linux-x64/publish/packaged-template.yml

The "--template-file" argument takes the path to our root template.

The "--s3-bucket" argument takes the target S3 bucket to which the processed child templates are uploaded. This will vary depending on the dev/uat/prd environment.

The "--output-template-file" argument contains the path and name of the processed root template that CloudFormation will use in the Pipeline deploy stage.

So all this command does is upload the child templates to the given S3 bucket and replace the relative path of each child template with its S3 URL.

Before SAM package:

    AppModuleA:
      Type: AWS::CloudFormation::Stack
      Properties:
        TemplateURL: ./Templates/ModuleA.yaml

After SAM package:

    AppModuleA:
      Type: AWS::CloudFormation::Stack
      Properties:
        TemplateURL: https://s3.amazonaws.com/dev-app-deploy/fe46e3afa38c476586dfa3ffd0dc4484.template
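To make the before/after transformation concrete, here is a rough Python simulation of the rewrite step. It is not how "sam package" is implemented and it uploads nothing; `package_template_url` and `rewrite_template_urls` are names I made up, and the object naming (MD5 hex digest of the file content plus ".template") is an assumption that merely matches the shape of the URL shown above.

```python
import hashlib


def package_template_url(child_template_text, bucket):
    """Build the S3 URL a packaged child template might be given.

    Assumption: the object name is the MD5 hex digest of the file content
    plus a ".template" suffix.
    """
    digest = hashlib.md5(child_template_text.encode("utf-8")).hexdigest()
    return f"https://s3.amazonaws.com/{bucket}/{digest}.template"


def rewrite_template_urls(resources, child_templates, bucket):
    """Return a copy of "Resources" with local TemplateURL paths replaced.

    child_templates maps a local path (as written in the root template)
    to the text of that child template. The input dict is not modified.
    """
    packaged = {}
    for name, resource in resources.items():
        properties = resource.get("Properties", {})
        local_path = properties.get("TemplateURL")
        is_nested_stack = resource.get("Type") == "AWS::CloudFormation::Stack"
        if is_nested_stack and local_path in child_templates:
            url = package_template_url(child_templates[local_path], bucket)
            resource = {**resource, "Properties": {**properties, "TemplateURL": url}}
        packaged[name] = resource
    return packaged


if __name__ == "__main__":
    root_resources = {
        "AppModuleA": {
            "Type": "AWS::CloudFormation::Stack",
            "Properties": {"TemplateURL": "./Templates/ModuleA.yaml"},
        }
    }
    children = {"./Templates/ModuleA.yaml": "Resources: {}\n"}
    print(rewrite_template_urls(root_resources, children, "dev-app-deploy"))
```

The point to take away is that only the "TemplateURL" value changes; everything else in the root template is carried over untouched.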

The updated root template (packaged-template.yml) is stored in the path defined under "output-template-file". Make sure the path defined here is the same as the "base-directory" under the Artifact section of the buildspec file. This will put the "packaged-template.yml" file in the root of the BuildArtifact.

BuildArtifact is a zip of all the files defined under the "artifacts > files" section, and AWS CodePipeline passes it to the next stage as input.

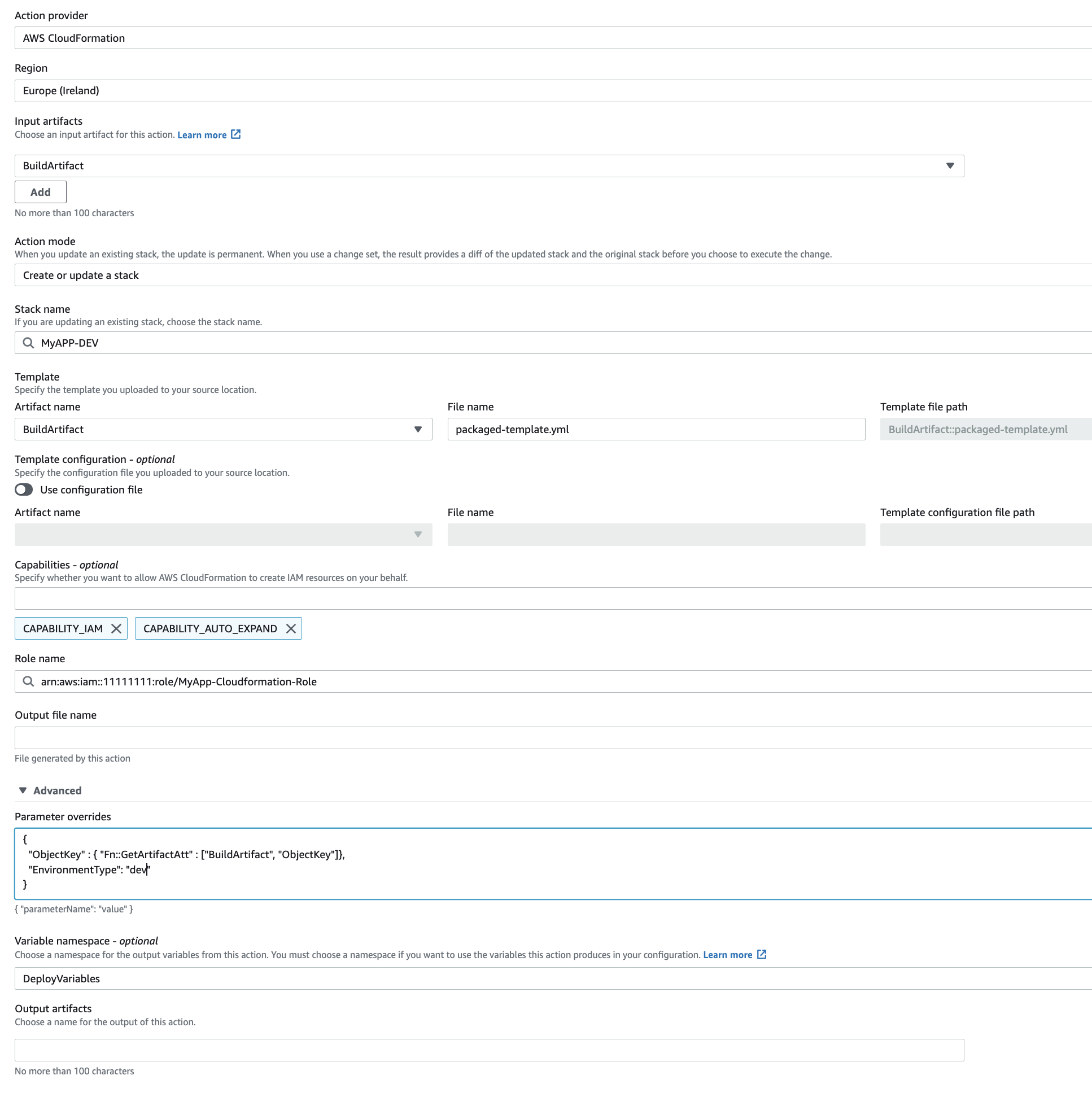

STEP 4 Deploy: The resources will be deployed at this stage using CloudFormation.

Action Provider: Select CloudFormation

Region: Autoselected, but make sure it's the AWS region you want for deployment.

Input artifacts: Select "BuildArtifact" or the name of the "Output artifact" if changed at the build stage (step 3).

Action Mode: Select "Create or update a stack"

Stack name: Provide a relevant name for the CloudFormation stack.

Template: Points to the "output-template-file" name from the sam package command run during the build stage.

We created the "packaged-template.yml" file at the root of the "BuildArtifact" in our setup. For that, the configuration will go like this:

Artifact Name: BuildArtifact

Filename: packaged-template.yml (Should be same as the output file from sam package cmd)

The "template file path" field auto-populates BuildArtifact::packaged-template.yml

If the root template file is in a folder within BuildArtifact, then in the "Filename" field we need to prefix the folder name like:

AppTemplateFolder/packaged-template.yml

The template file path field will be something like this:

BuildArtifact::AppTemplateFolder/packaged-template.yml
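The relationship between the buildspec paths and the template file path can be worked out mechanically. The sketch below is a hypothetical helper (`template_path` is my own name) that derives the "ArtifactName::path" value from the "--output-template-file" and "base-directory" values, assuming the artifact root corresponds to "base-directory" as described above.

```python
from pathlib import PurePosixPath


def template_path(artifact_name, output_template_file, base_directory):
    """Build the "ArtifactName::path" value for the deploy action.

    The path is the packaged template's location relative to the buildspec
    "base-directory", because that directory becomes the artifact root.
    Raises ValueError if the template is not inside base_directory.
    """
    relative = PurePosixPath(output_template_file).relative_to(
        PurePosixPath(base_directory)
    )
    return f"{artifact_name}::{relative}"


if __name__ == "__main__":
    publish_dir = "./AppProject/bin/Release/netcoreapp3.1/linux-x64/publish/"
    print(template_path("BuildArtifact", publish_dir + "packaged-template.yml", publish_dir))
    print(template_path("BuildArtifact", publish_dir + "AppTemplateFolder/packaged-template.yml", publish_dir))
```

A `ValueError` here is a useful early warning: it means the packaged template was written outside "base-directory" and would not end up in the BuildArtifact at all.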

Capabilities: Select "CAPABILITY_IAM" & "CAPABILITY_AUTO_EXPAND". "CAPABILITY_AUTO_EXPAND" is required because the templates, including the nested ones, use the SAM transform, which CloudFormation treats as a macro. "CAPABILITY_IAM" is the acknowledgement CloudFormation requires when a stack may create or modify IAM resources.

Role Name: Select a CloudFormation role with permissions to create resources and read access for the deploy S3 bucket.

Parameter overrides: Expand "Advanced" and under "Parameter overrides" paste in the code below:

    {
      "ObjectKey" : { "Fn::GetArtifactAtt" : ["BuildArtifact", "ObjectKey"]},
      "EnvironmentType": "dev"
    }

CodePipeline copies and writes files to the Pipeline's artifact store (an S3 bucket) when running a pipeline. CodePipeline auto-generates the file names in the artifact store, and these file names are unknown before you run the Pipeline. "Fn::GetArtifactAtt" allows us to extract information like Artifact filename or bucket.

"ObjectKey" : { "Fn::GetArtifactAtt" : ["BuildArtifact", "ObjectKey"]}

The above code retrieves the file path of the BuildArtifact from the S3 root level and stores it in the "ObjectKey". The value may look something like this: "MyAppPipeline/BuildArtif/3fef3453"

The "EnvironmentType" variable overrides the default "EnvironmentType" value. For example: We will set it to "EnvironmentType": "uat" for the UAT environment.

For the development environment the override is not required, since the default value of "EnvironmentType" is "dev", but it is included here for consistency.
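Since the only thing that changes between the dev, UAT and PRD pipelines is the "EnvironmentType" value, the override JSON can be generated rather than hand-edited. This is a small sketch with a made-up function name (`parameter_overrides`); it only builds the JSON text to paste into the "Parameter overrides" field and does not talk to AWS.

```python
import json

# Allowed values of the "EnvironmentType" parameter in the root template.
ALLOWED_ENVIRONMENTS = ("dev", "uat", "prd")


def parameter_overrides(environment, artifact_name="BuildArtifact"):
    """Return the "Parameter overrides" JSON for one environment."""
    if environment not in ALLOWED_ENVIRONMENTS:
        raise ValueError(f"unknown environment: {environment!r}")
    overrides = {
        # Resolved by CodePipeline at run time to the artifact's S3 object key.
        "ObjectKey": {"Fn::GetArtifactAtt": [artifact_name, "ObjectKey"]},
        "EnvironmentType": environment,
    }
    return json.dumps(overrides, indent=2)


if __name__ == "__main__":
    print(parameter_overrides("uat"))
```

Rejecting unknown environment names up front mirrors the "AllowedValues" check that CloudFormation would otherwise apply only at deploy time.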

In our Pipeline, we worked with a custom-defined S3 bucket. If we had left the "default location" under "Step 1 Settings", the Pipeline would have created a new S3 bucket to store the Artifact.

In the Child template, CodeUri requires both the S3 bucket and the BuildArtifact name. To fetch the S3 bucket name at this stage, we can again use "Fn::GetArtifactAtt", this time with the "BucketName" key. It returns the name of the S3 bucket containing the BuildArtifact.

    {
      "ObjectKey" : { "Fn::GetArtifactAtt" : ["BuildArtifact", "ObjectKey"]},
      "BucketName" : { "Fn::GetArtifactAtt" : ["BuildArtifact", "BucketName"]},
      "EnvironmentType": "dev"
    }

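To further clarify the format, the names on the left are chosen by us, but each one must match a parameter declared in the root template, so a parameter such as "BucketName" has to be added to the root template before it can be overridden. The values passed to "Fn::GetArtifactAtt" are pre-defined. You can read more about the function in the AWS CodePipeline documentation on parameter override functions.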

    {
      "MyAppObjectKey" : { "Fn::GetArtifactAtt" : ["BuildArtifact", "ObjectKey"]},
      "MyPipelineBucketName" : { "Fn::GetArtifactAtt" : ["BuildArtifact", "BucketName"]},
      "EnvironmentType": "dev"
    }

Variable namespace: As we are using parameter overrides, please make sure "Variable namespace" is defined. It could be any string. The default value is "DeployVariables" in the "Deploy" stage.

Click "Next". Review the settings and, if all seems good, create the Pipeline. The Pipeline will auto-execute to pull data from the repo defined in the "Source" step. If all the configs are correct, the build should work, and CloudFormation will deploy the resources successfully.

Troubleshooting pointers

We created three roles in our Pipeline.

Service Role: Defined in step 1. If we use a "Custom location", we need to ensure this role has "write" access to the S3 bucket. This role is used to create a copy of the source code, referred to as "SourceArtifact", from the code repo.

Build Service Role: Defined in step 3 when creating the build project. This role is used to create "BuildArtifact" from the SourceArtifact, so it needs "read and write" access to the S3 bucket used by the Pipeline.

Deploy Role: CloudFormation uses this role to deploy resources, so it needs not only the correct deployment permissions but also read access to the Pipeline S3 bucket, to read the templates.

Check build output.

Projects often fail during the build because of an incorrect path in the buildspec. Navigate to "Build projects" > select the project linked with the Pipeline > click on the latest "Build run" to check the detailed output for error details. Another point to check is whether the name of the Build Artifact defined under the "Output artifacts" field is correctly referenced. The default is "BuildArtifact", as we used in this example. If it's a different value, make sure the reference is consistent across all steps.

Failed "sam package" command.

The "sam package" command may fail if the path to the source template file is incorrect. In our example, we passed just "AppProject.yaml", which means the root template sits at the root of "SourceArtifact". To check, navigate to the S3 bucket configured in Step 1. There will be a folder with the Pipeline name. It should contain two sub-folders: "BuildArtif" (contains build zip files) and "SourceArti" (a zip of the source files copied from Pipeline Source). We can download these files, add the ".zip" extension, and unzip to access the contents.

Download the SourceArtifact with the latest timestamp, unzip and check the location of the root template. Input the correct path in the sam package command.
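Instead of unzipping by hand, the downloaded artifact can be inspected with Python's standard `zipfile` module. The sketch below uses a made-up helper name (`find_template_paths`) and, so that it runs anywhere, builds a tiny in-memory zip as a stand-in for a real SourceArtifact; with a real download you would pass the file's bytes instead.

```python
import io
import zipfile


def find_template_paths(archive_bytes, template_name):
    """Return every path inside the zip whose file name equals template_name."""
    with zipfile.ZipFile(io.BytesIO(archive_bytes)) as archive:
        return sorted(
            name
            for name in archive.namelist()
            if name.rsplit("/", 1)[-1] == template_name
        )


def build_sample_archive():
    """Create a small in-memory zip standing in for a downloaded SourceArtifact."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        archive.writestr("AppProject.yaml", "Resources: {}\n")
        archive.writestr("Templates/ModuleA.yaml", "Resources: {}\n")
    return buffer.getvalue()


if __name__ == "__main__":
    sample = build_sample_archive()
    # The path printed here is what "--template-file" should point to.
    print(find_template_paths(sample, "AppProject.yaml"))
```

An empty result means the template is not in the source zip at all, which usually points to a wrong branch or repo rather than a wrong path.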

The command may also fail if the IAM role used by the build project does not have write access to the S3 bucket defined in the command parameter.

Deployment Error.

If the CloudFormation stack is in the "ROLLBACK_COMPLETE" state because of a previous unsuccessful attempt, we need to delete the stack manually before retrying the Pipeline. Another reason the deployment may fail is that the IAM role linked with CloudFormation does not have rights to read the S3 URLs of the Child templates.

I hope this article will assist in successfully deploying the Pipeline across multiple environments. If you have any questions, please leave a comment or DM on Twitter.